Test Automation of SolutionBuilder applications: Getting Started

Prerequisites

- Install the latest Node.js version. npm is included with it.

- Install git.

- Install Java Runtime Environment.
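The prerequisites above can be verified from a script before continuing. The following is a minimal sketch, not part of the test framework: the helper names `isToolAvailable` and `checkPrerequisites` are hypothetical, and it assumes each tool is reachable through the PATH and prints its version with the flag shown.

```javascript
'use strict';
const { spawnSync } = require('child_process');

// Returns true when the command could be started through the shell and exited with code 0.
// A shell is used because npm is a .cmd wrapper on Windows.
function isToolAvailable(command, args) {
  const commandLine = [command].concat(args).join(' ');
  const result = spawnSync(commandLine, { stdio: 'ignore', shell: true });
  return !result.error && result.status === 0;
}

// Tools named in the prerequisites, with the flag each one uses to print its version.
const prerequisites = [
  { name: 'Node.js', command: 'node', args: ['--version'] },
  { name: 'npm', command: 'npm', args: ['--version'] },
  { name: 'git', command: 'git', args: ['--version'] },
  { name: 'Java', command: 'java', args: ['-version'] }
];

// Checks every prerequisite and reports whether it was found.
function checkPrerequisites() {
  return prerequisites.map(tool => ({
    name: tool.name,
    available: isToolAvailable(tool.command, tool.args)
  }));
}

for (const tool of checkPrerequisites()) {
  console.log(`${tool.name}: ${tool.available ? 'found' : 'NOT found'}`);
}
```

Any tool reported as "NOT found" should be installed, or added to the PATH, before moving on to the preparations.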

Preparations

- Unpack the e2e archive from <InstallationFolder>/Test/e2e.zip (e.g. c:\dev\custom\root\Test\e2e.zip) to c:\dev\e2e\

- Open a command prompt as administrator.

- Install npm dependencies

npm install

- Run the following command with administrator permissions. When asked for credentials, enter the credentials of a user with an administration role in the installed system. This creates the test users for your tests and some other required data.

npm run create-data

- The parameters for test execution are configured in the file c:\dev\e2e\CustomData.js:

- Host for testing

- Browser that will be used for testing

- Tests that will be executed

- Many other options

Here is an example of what this file may look like:

    module.exports = {
        capability: 'chrome',          // browser to run tests: 'chrome', 'firefox'... support for others to be added
        host: 'yourPC.matrix42.de',    // app server
        specs: [
            'spec/sample-spec1.js',    // relative path to a spec file
            'spec/sample-spec2.js'     // relative path to a spec file
        ]
    };

- Collect system objects from the installed system (e.g. Applications, Dialogs, Wizards, Actions etc.)

npm run collect
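A typo in CustomData.js usually shows up only once the run has started, so it can help to sanity-check the file first. The sketch below is an illustration only: `validateCustomData` is a hypothetical helper, not a framework function, and the list of known browsers is an assumption taken from the comment in the sample file.

```javascript
'use strict';

// Assumption: only the browsers named in the sample CustomData.js comment are listed here.
const KNOWN_CAPABILITIES = ['chrome', 'firefox'];

// Hypothetical helper: returns a list of problems found in a CustomData-style object.
// An empty list means the three documented parameters look plausible.
function validateCustomData(config) {
  const problems = [];
  if (!KNOWN_CAPABILITIES.includes(config.capability)) {
    problems.push(`unknown capability: ${config.capability}`);
  }
  if (typeof config.host !== 'string' || config.host.trim() === '') {
    problems.push('host must be a non-empty string');
  }
  if (!Array.isArray(config.specs) || config.specs.length === 0) {
    problems.push('specs must list at least one spec file');
  }
  return problems;
}

const sample = {
  capability: 'chrome',
  host: 'yourPC.matrix42.de',
  specs: ['spec/sample-spec1.js']
};

console.log(validateCustomData(sample));
```

In a real setup the object would be loaded with `require('./CustomData.js')` instead of being declared inline.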

Basics

- Tests use Selenium WebDriver to control browsers.

- Most operations that interact with the browser are asynchronous, so the async/await approach should be used when coding tests.

- There are several global objects that help to write tests:

- Applications - contains all available applications with their objects (e.g. Previews, Dialogs, Actions, Navigation Items etc.)

- Utils - contains different helpers to make development easier.

- Consts - contains different constants and blocks of constants that will help to write more readable test code.

- Collected - contains different useful system objects collected from target system

All these objects are subject to change and extension in future versions of the test framework.

When writing test code, if you are looking for the name of a Preview, Action etc., you can find it in the Administration application, in the name field of the object you want to use.

Name suggestions should also pop up if you use an IDE.
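To illustrate why async/await matters for browser operations, here is a small self-contained sketch. `FakeField` is a hypothetical stand-in that only simulates an asynchronous control with timers. It is not a framework class, and real controls come from the Applications object.

```javascript
'use strict';

// Hypothetical stand-in for a dialog field: both operations complete asynchronously.
class FakeField {
  constructor() {
    this.value = '';
  }
  setValue(text) {
    return new Promise(resolve => setTimeout(() => { this.value = text; resolve(); }, 20));
  }
  getValue() {
    return new Promise(resolve => setTimeout(() => resolve(this.value), 5));
  }
}

// Missing 'await': the read is issued while the write is still pending.
async function withoutAwait(field) {
  field.setValue('Subject A');
  return field.getValue();
}

// With 'await': the read starts only after the write has completed.
async function withAwait(field) {
  await field.setValue('Subject A');
  return field.getValue();
}

async function demo() {
  const racy = await withoutAwait(new FakeField());
  const ordered = await withAwait(new FakeField());
  return { racy, ordered };
}

demo().then(result => console.log(result)); // prints { racy: '', ordered: 'Subject A' }
```

The same applies to assertions: a value has to be awaited before it is passed to `expect`, otherwise the assertion compares a pending promise instead of the actual value.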

Write e2e story

Create a new file spec/sample-spec1.js for your e2e story - a new test suite.

As a sample, we'll look at Problem CRUD tests.

    'use strict';

    // Function 'describe' defines a separate test suite
    // First parameter is a description of a test suite
    // Second parameter is a function that implements the test suite
    describe('Problems CRUD', () => {
        const search = Utils.search; // Search utils help to find needed objects
        const notification = Utils.notification; // Notification utils are designed to handle different notification popups
        const browserUtils = Utils.browser; // Browser utils aggregate different methods to manipulate the browser being run
        const serviceDeskApp = Applications.ServiceDesk; // Creating a shorthand to a ServiceDesk application

        // Creators are helpers that allow to create/update/delete objects without using the UI, but in the manner a user does it in the UI.
        // Here we create a new instance of Problem creator
        const problemCreator = new Utils.creators.Problem();

        // Next block shows how to get the dialog and preview for a Problem entity.
        // In that way it is possible to get any available application's dialog, preview or wizard
        const problemDialog = serviceDeskApp.Dialogs.ProblemsDialog;
        const problemPreview = serviceDeskApp.Previews.PreviewProblems;

        // Getting generic delete wizard
        const deleteWizard = serviceDeskApp.Wizards.DeleteObjects;

        // Let's create unique names of Problem objects for our cases.
        // Creators have a 'mask' property that contains a unique sequence of characters per creator instance
        const uniqueCreateKey = problemCreator.mask + 'C';
        const uniqueEditKey = problemCreator.mask + 'U';
        const uniqueDeleteKey = problemCreator.mask + 'D';

        // 'beforeAll' function will be executed before all the tests
        // there is also a possibility to perform operations before each test case - use function 'beforeEach' for that
        beforeAll(async () => {
            // Using the creator we create a new Problem object
            // It will have
            //   Impact: User
            //   Description: Test_Description
            //   Subject: taken from uniqueEditKey variable
            await problemCreator.withImpact('User').withDescription('Test_Description').create(uniqueEditKey);

            // Create another Problem object to check delete functionality
            await problemCreator.create(uniqueDeleteKey);

            // Logging in as a user with Service Desk agent role. Other options are also possible e.g. signInAsAdmin, signInAsEndUser etc
            await Utils.login.signInAsAgent();

            // Open Service Desk Application
            await serviceDeskApp.open();

            // Click on Problems navigation item
            await serviceDeskApp.NavigationItems.Problems.click();

            // As Problems navigation item has a default filter we need to click on it again to see all the Problems
            await serviceDeskApp.NavigationItems.Problems.click();
        });

        // 'afterAll' function will be executed after all the tests have run (even if they failed)
        // there is also a possibility to perform operations after each test case - use function 'afterEach' for that
        afterAll(async () => {
            // Using a creator we delete all Problems that contain problemCreator.mask within their subjects
            await problemCreator.deleteAllByMask(problemCreator.mask);
        });

        // Function 'it' defines a separate test case
        // First parameter is a description of a test case
        // Second parameter is an async function that implements the test case
        it('Agent should be able to create new Problem', async () => {
            // Click on action 'Add Problem' - a create problem dialog will be opened
            await serviceDeskApp.SearchPages.Problems.Actions.AddProblem.click();

            // Now a create problem dialog is opened
            // Set value from uniqueCreateKey into Subject field
            await problemDialog.GeneralTab.Subject.setValue(uniqueCreateKey);

            // Clearing SLA picker
            await problemDialog.GeneralTab.SLA.clear();

            // browserUtils.waitAngular is waiting for angular background processes
            // It is needed here because changing SLA forces different recalculations in the background
            await browserUtils.waitAngular();

            // Setting a new SLA by finding it in picker by name
            await problemDialog.GeneralTab.SLA.selectItemByText('Problems Service Level Agreement');

            // Setting description to Description: mx-at-Problem-1
            await problemDialog.GeneralTab.DescriptionHTML.setValue('Description: mx-at-Problem-1');

            // Select Impact 'User'
            await problemDialog.GeneralTab.Impact.selectItemByText('User');

            // Waiting for message about successful operation completion after click on Done button
            await notification.clickWithSuccessCheck(problemDialog.Actions.Done, true);

            // Find a new object in grid and open its preview
            await search.find(uniqueCreateKey);

            // Now we check if we have proper values of SLA and Impact
            expect(await problemPreview.SLA.getValue()).toBe('Problems Service Level Agreement');
            expect(await problemPreview.Impact.getValue()).toBe('User');
        });

        // Edit Problem test case
        it('Agent should be able to edit Problem', async () => {
            // Find a Problem with a subject from a variable 'uniqueEditKey' in grid and open its preview
            await search.find(uniqueEditKey);

            // Check the values of impact and description on a Preview
            expect(await problemPreview.Impact.getValue()).toBe('User');
            expect(await problemPreview.DescriptionHTML.getValue()).toBe('Test_Description');

            // Click on Edit action (activities have a special Edit action 'EditActivity' - in most cases it will be called 'Edit')
            await problemPreview.Actions.EditActivity.click();

            // Check subject, impact and description on a dialog side
            expect(await problemDialog.GeneralTab.Subject.getValue()).toBe(uniqueEditKey);
            expect(await problemDialog.GeneralTab.Impact.getValue()).toBe('User');
            expect(await problemDialog.GeneralTab.DescriptionTab.DescriptionHTML.getValue()).toBe('Test_Description');

            // Add '_Updated' suffix to a subject
            await problemDialog.GeneralTab.Subject.setValue(uniqueEditKey + '_Updated');

            // Clear SLA
            await problemDialog.GeneralTab.SLA.clear();

            // Wait for background processes
            await browserUtils.waitAngular();

            // Set a new SLA: 'Problems Operation Level Agreement'
            await problemDialog.GeneralTab.SLA.selectItemByText('Problems Operation Level Agreement');

            // Set new description to 'Test_Description_Updated'
            await problemDialog.GeneralTab.DescriptionTab.DescriptionHTML.setValue('Test_Description_Updated');

            // Set impact to Workgroup
            await problemDialog.GeneralTab.Impact.selectItemByText('Workgroup');

            // Waiting for message about successful operation completion after click on Save button
            await notification.clickWithSuccessCheck(problemDialog.Actions.Save, true);

            // Check if subject, SLA and impact are saved properly
            expect(await problemDialog.GeneralTab.Subject.getValue()).toBe(uniqueEditKey + '_Updated');
            expect(await problemDialog.GeneralTab.SLA.getValue()).toBe('Problems Operation Level Agreement');
            expect(await problemDialog.GeneralTab.Impact.getValue()).toBe('Workgroup');

            // Waiting for message about successful operation completion after click on Done button
            await notification.clickWithSuccessCheck(problemDialog.Actions.Done, true);

            // Check if SLA and impact show correct values on Preview
            expect(await problemPreview.SLA.getValue()).toBe('Problems Operation Level Agreement');
            expect(await problemPreview.Impact.getValue()).toBe('Workgroup');
        });

        // Delete Problem test case
        it('Agent should be able to delete Problem', async () => {
            // Find a Problem with a subject from a variable 'uniqueDeleteKey' in grid and open its preview
            await search.find(uniqueDeleteKey);

            // Click on action delete
            await problemPreview.Actions.DeleteObjects.click();

            // Delete wizard is shown
            // Click on Finish button and wait for success message
            await notification.clickWithSuccessCheck(deleteWizard.DeleteStep.Finish);

            // Search for a Problem with a subject from a variable 'uniqueDeleteKey' and expect it is not found
            expect(await search.isAnyItemFound(uniqueDeleteKey)).toBe(false);
        });
    });

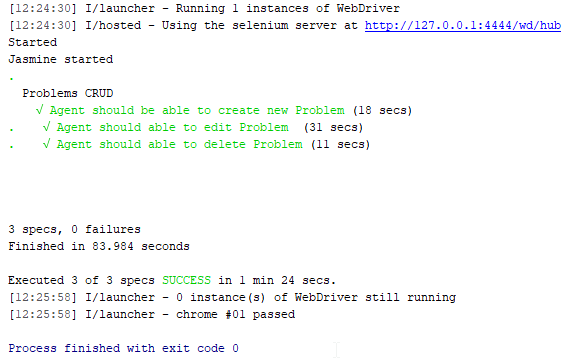

After running this test suite, the logs should look like this:

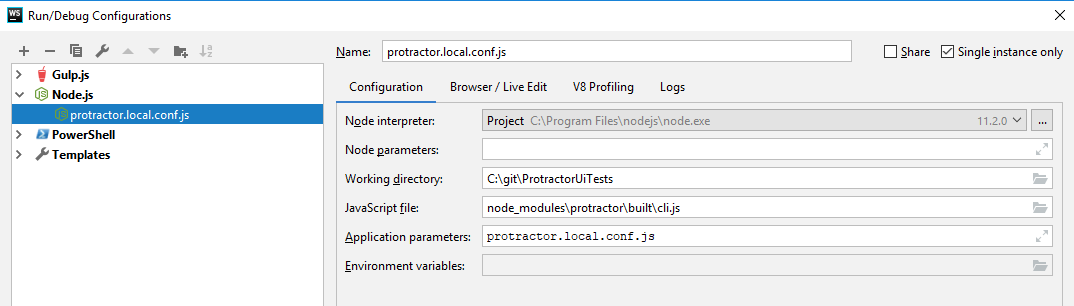
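The sample suite relies on mask-prefixed subjects so that afterAll can remove exactly the objects the suite created. The idea can be sketched in isolation as follows. `FakeCreator` is a hypothetical in-memory stand-in for Utils.creators.Problem, and the mask format shown is an assumption made for illustration, since the real format is not documented here.

```javascript
'use strict';

// Hypothetical in-memory stand-in for a creator; it stores subjects in a plain array.
class FakeCreator {
  constructor(store) {
    this.store = store;
    // Assumption: any string that is unique per instance will do for this illustration.
    this.mask = `demo-${Date.now().toString(36)}-${Math.random().toString(36).slice(2, 8)}-`;
  }

  async create(subject) {
    this.store.push(subject);
  }

  // Removes every stored subject that contains the given mask.
  async deleteAllByMask(mask) {
    for (let i = this.store.length - 1; i >= 0; i--) {
      if (this.store[i].includes(mask)) {
        this.store.splice(i, 1);
      }
    }
  }
}

async function demo() {
  const store = ['Existing problem'];           // data that does not belong to the suite
  const creator = new FakeCreator(store);
  await creator.create(creator.mask + 'U');     // object for the edit test
  await creator.create(creator.mask + 'D');     // object for the delete test
  await creator.deleteAllByMask(creator.mask);  // cleanup, as in afterAll
  return store;
}

demo().then(store => console.log(store)); // prints [ 'Existing problem' ]
```

Because the mask is unique per creator instance, the cleanup leaves objects created by other suites or by real users untouched.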

Run/debug tests

It is important to run E2E tests without DEV certificates. DEV certificates are located in the "Certificates/" folder and can be identified by the text "m42InternalDEV" inside the certificate files.

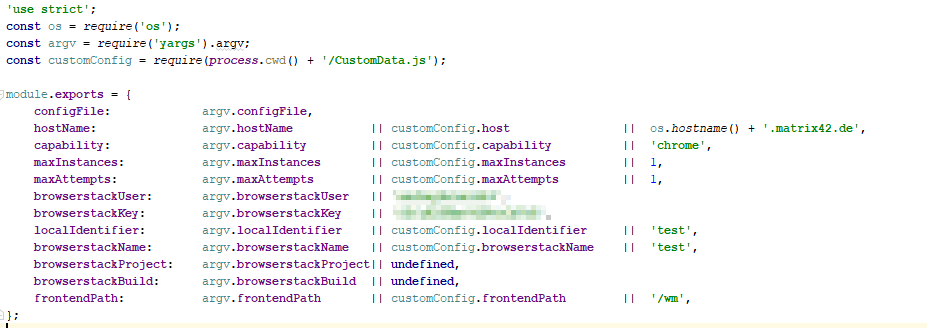
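Searching the certificate files for the marker text can be scripted. The following is a minimal sketch under two assumptions: the helper name `findFilesContaining` is hypothetical, and the marker is stored in the files as plain single-byte text (a file saved in UTF-16 would not match).

```javascript
'use strict';
const fs = require('fs');
const path = require('path');

// Returns the sorted names of files directly inside `folder` whose content contains `marker`.
function findFilesContaining(folder, marker) {
  return fs.readdirSync(folder, { withFileTypes: true })
    .filter(entry => entry.isFile())
    .map(entry => entry.name)
    .filter(name => fs.readFileSync(path.join(folder, name)).includes(marker))
    .sort();
}

// Folder name and marker text as given in the section above.
const certificatesFolder = 'Certificates';
if (fs.existsSync(certificatesFolder)) {
  console.log(findFilesContaining(certificatesFolder, 'm42InternalDEV'));
}
```

An empty list means no DEV certificate was detected in that folder.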

There are different options for running tests. Many of them are configurable and are defined in the Configuration/arguments.js file.

Options can be set either in CustomData.js or as command prompt parameters. They also have default values.

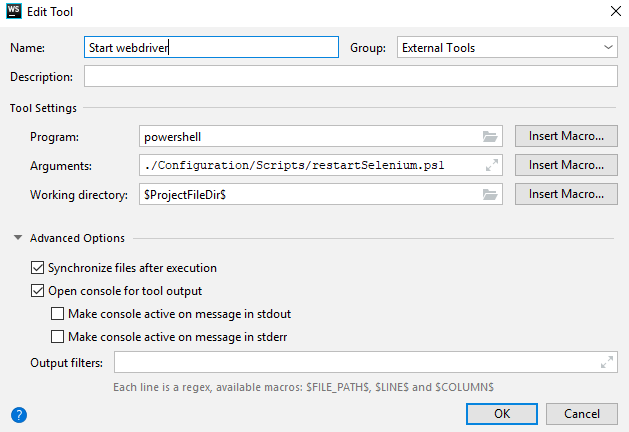

To run the tests, execute the following commands:

powershell ./Configuration/Scripts/restartSelenium.ps1

node node_modules\protractor\built\cli.js protractor.local.conf.js
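The Selenium restart and the Protractor run can also be chained from one Node.js script that stops at the first failing step. This is only a sketch: `runSteps` is a hypothetical helper, and it assumes the script is started from the e2e folder so that the relative paths resolve.

```javascript
'use strict';
const { spawnSync } = require('child_process');
const path = require('path');

// The Selenium restart and the Protractor run, in the order they have to be executed.
const steps = [
  { command: 'powershell', args: ['./Configuration/Scripts/restartSelenium.ps1'] },
  {
    command: 'node',
    args: [path.join('node_modules', 'protractor', 'built', 'cli.js'), 'protractor.local.conf.js']
  }
];

// Default runner: starts the process, shares the console with it and returns its exit code.
function spawnStep(command, args) {
  return spawnSync(command, args, { stdio: 'inherit' }).status;
}

// Runs the steps in order and stops at the first one that fails.
// `run` is injectable so the sequencing can be tried out without starting real processes.
function runSteps(stepList, run = spawnStep) {
  for (const step of stepList) {
    const status = run(step.command, step.args);
    if (status !== 0) {
      return status === null ? 1 : status; // null means the process could not be started
    }
  }
  return 0;
}

// Dry run: only print what would be executed.
for (const step of steps) {
  console.log([step.command].concat(step.args).join(' '));
}
```

To actually start the run, replace the dry-run loop with `process.exitCode = runSteps(steps);`.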

It is also possible to run and debug tests within an IDE. Here is a sample configuration for running under WebStorm:

In the "Before launch" section, add "Run External tool":

Results

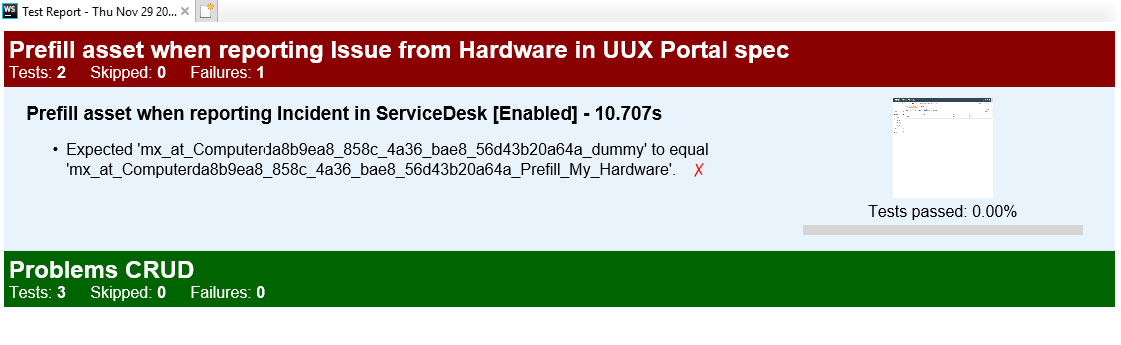

The final results can be found in the file Report/htmlReport.html

Only failed test cases are displayed in detail. For passed test cases, only the counts are shown.

It will look like this (a sample report):

Screenshots of failed cases are clickable, but they are taken at the end of a test case, not at the moment an assertion fails.

The Report folder is not cleared by default, so you need to delete it manually if you want a clean report.